42 soft labels machine learning

Pseudo Labelling - A Guide To Semi-Supervised Learning There are three kinds of machine learning approaches: supervised, unsupervised, and reinforcement learning. Supervised learning, as we know, is where both data and labels are present. Unsupervised learning is where only data and no labels are present. Reinforcement learning is where agents learn from the rewards generated by the actions they take.

Learning classification models with soft-label information Materials and methods: Two types of methods that can learn improved binary classification models from soft labels are proposed. The first relies on probabilistic/numeric labels, the other on ordinal categorical labels. We study and demonstrate the benefits of these methods for learning an alerting model for heparin-induced thrombocytopenia.

Label smoothing with Keras, TensorFlow, and Deep Learning This type of label assignment is called soft label assignment. Unlike hard label assignment, where class labels are binary (i.e., positive for one class and negative for all other classes), soft label assignment allows the positive class to have the largest probability while all other classes have a very small probability.
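A minimal sketch of the soft label assignment described above, assuming the common uniform label smoothing formula (blend the one-hot vector with a uniform distribution). The function name `smooth_labels` and the value `epsilon=0.1` are illustrative choices, not taken from the quoted tutorial.

```python
def smooth_labels(one_hot, epsilon=0.1):
    """Blend a one-hot label with the uniform distribution over classes.

    Assumed formula: y_soft = (1 - epsilon) * y_hard + epsilon / K,
    where K is the number of classes.
    """
    num_classes = len(one_hot)
    return [(1.0 - epsilon) * y + epsilon / num_classes for y in one_hot]


hard = [0.0, 0.0, 1.0, 0.0]            # hard label: class 2 is the positive class
soft = smooth_labels(hard, epsilon=0.1)

# The positive class keeps the largest probability; the rest get a small share.
print(soft)
```

The smoothed vector still sums to 1, so it can be used wherever a probability distribution over classes is expected.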


Is it okay to use cross entropy loss function with soft labels? The sum is taken over the set of possible class labels. In the case of 'soft' labels like you mention, the labels are no longer class identities themselves, but probabilities over two possible classes. Because of this, you can't use the standard expression for the log loss. But the concept of cross entropy still applies.

Regression - Features and Labels - Python Programming You have a few choices here regarding how to handle missing data. You can't just pass a NaN (Not a Number) datapoint to a machine learning classifier; you have to handle it. One popular option is to replace missing data with -99,999. With many machine learning classifiers, this will just be recognized and treated as an outlier feature.

Learning Soft Labels via Meta Learning - Apple Machine Learning Research The learned labels continuously adapt themselves to the model's state, thereby providing dynamic regularization. When applied to the task of supervised image classification, our method leads to consistent gains across different datasets and architectures. For instance, dynamically learned labels improve ResNet18 by 2.1% on CIFAR100.
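To illustrate the cross-entropy question quoted above, here is a small sketch of the general cross-entropy sum, which accepts a probability vector as the target instead of a class identity. The logits and target values are made up for illustration, and the helper names are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(target, predicted, eps=1e-12):
    """H(target, predicted) = -sum_k target_k * log(predicted_k).

    The sum runs over the set of possible class labels, so the target
    may be one-hot (hard) or any probability vector (soft).
    """
    return -sum(t * math.log(max(p, eps)) for t, p in zip(target, predicted))


predicted = softmax([2.0, 0.5, -1.0])   # illustrative model output

hard_target = [1.0, 0.0, 0.0]           # class identity
soft_target = [0.7, 0.2, 0.1]           # probabilities over the classes

hard_loss = cross_entropy(hard_target, predicted)
soft_loss = cross_entropy(soft_target, predicted)
print(hard_loss, soft_loss)
```

With a hard target the sum collapses to the usual log loss, `-log(predicted[true_class])`; with a soft target every class contributes in proportion to its target probability.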

Multi-Class Neural Networks: Softmax | Machine Learning - Google Developers Multi-Class Neural Networks: Softmax. Recall that logistic regression produces a decimal between 0 and 1.0. For example, a logistic regression output of 0.8 from an email classifier suggests an 80% chance of an email being spam and a 20% chance of it being not spam. Clearly, the sum of the probabilities of an email being either spam or not spam ...

How to Label Data for Machine Learning: Process and Tools - AltexSoft Data labeling (or data annotation) is the process of adding target attributes to training data and labeling them so that a machine learning model can learn what predictions it is expected to make. This process is one of the stages in preparing data for supervised machine learning.

python - scikit-learn classification on soft labels - Stack Overflow Generally speaking, the form of the labels ("hard" or "soft") is given by the algorithm chosen for prediction and by the data on hand for the target. If your data has "hard" labels, and you desire a "soft" label output by your model (which can be thresholded to give a "hard" label), then yes, logistic regression is in this category.

[2009.09496] Learning Soft Labels via Meta Learning - arXiv.org Learning Soft Labels via Meta Learning. Nidhi Vyas, Shreyas Saxena, Thomas Voice. One-hot labels do not represent soft decision boundaries among concepts, and hence, models trained on them are prone to overfitting. Using soft labels as targets provides regularization, but different soft labels might be optimal at different stages of optimization.

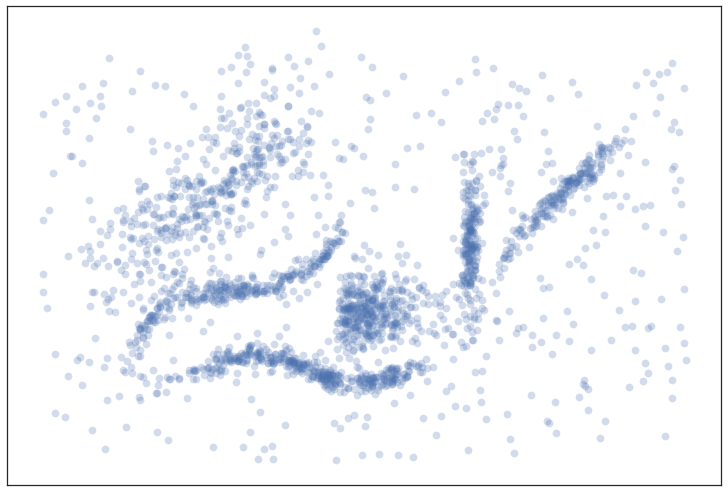

Semi-Supervised Learning With Label Propagation - Machine Learning Mastery Nodes in the graph then have soft labels or label distributions based on the labels or label distributions of examples connected nearby in the graph. Many semi-supervised learning algorithms rely on the geometry of the data induced by both labeled and unlabeled examples to improve on supervised methods that use only the labeled data.

What is the definition of "soft label" and "hard label"? A soft label is one which has a score (probability or likelihood) attached to it. So the element is a member of the class in question with a probability/likelihood score of, e.g., 0.7; this implies that an element can be a member of multiple classes (presumably with different membership scores), which is usually not possible with hard labels.

Creating targets for machine learning labels - Python Programming Hello and welcome to part 10 (and 11) of the Python for Finance tutorial series. In the previous tutorial, we began to build our labels for our attempt at using machine learning for investing with Python. In this tutorial, we're going to use what we did last tutorial to actually generate our labels when we're ready. Now we're going to create ...

Label Smoothing - Make your model less (over)confident Label smoothing is often used to increase robustness and improve classification problems. Label smoothing is a form of output distribution regularization that prevents overfitting of a neural network by softening the ground-truth labels in the training data in an attempt to penalize overconfident outputs. The intuition behind label smoothing is ...

Efficient Learning of Classification Models from Soft-label Information ... soft-label further refining its class label. One caveat of applying this idea is that soft labels based on human assessment are often noisy. To address this problem, we develop and test a new classification model learning algorithm that relies on soft-label binning to limit the effect of soft-label noise. We ...

ARIMA for Classification with Soft Labels | by Marco Cerliani | Towards ... We have soft targets/labels p ∈ (0, 1) (make sure to clip the targets to [eps, 1 - eps] to avoid instability issues when we take logs). Apply the logit transform, log(p / (1 - p)), to the clipped targets, then fit a regression model. Finally, to do inference, we take the sigmoid of the predictions from the regression model.

PDF Efficient Learning with Soft Label Information and Multiple Annotators Note that our learning from auxiliary soft labels approach is complementary to active learning: while the latter aims to select the most informative examples, we aim to gain more useful information from those selected. This gives us an opportunity to combine these two approaches.
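The clip, regress, sigmoid recipe quoted above can be sketched as follows. This assumes the "logs" mentioned in the snippet refer to the logit transform, and it swaps in a single-feature ordinary least squares fit as the regression model purely for illustration; the data and helper names are hypothetical.

```python
import math


def clip(p, eps=1e-6):
    """Keep a soft target inside [eps, 1 - eps] so the logit stays finite."""
    return min(max(p, eps), 1.0 - eps)


def logit(p):
    return math.log(p / (1.0 - p))


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_line(xs, ys):
    """Closed-form least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Illustrative data: one feature and a soft target per example.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
soft_targets = [0.05, 0.2, 0.5, 0.8, 0.95]

# 1) clip, 2) move to logit space, 3) fit a plain regression there.
zs = [logit(clip(p)) for p in soft_targets]
slope, intercept = fit_line(xs, zs)


def predict_proba(x):
    """Inference: sigmoid of the regression prediction."""
    return sigmoid(slope * x + intercept)


print(predict_proba(2.0))
```

Because the regression runs in logit space, the sigmoid at inference always maps predictions back into (0, 1), whatever regressor is used.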

Understanding Deep Learning on Controlled Noisy Labels - Google AI Blog In "Beyond Synthetic Noise: Deep Learning on Controlled Noisy Labels", published at ICML 2020, we make three contributions towards better understanding deep learning on non-synthetic noisy labels. First, we establish the first controlled dataset and benchmark of realistic, real-world label noise sourced from the web (i.e., web label noise ...

How To Label Data for Machine Learning: Data Labelling in Machine Learning & AI - Soft2Share

Robust Machine Reading Comprehension by Learning Soft labels We argue that hard labels limit the model's ability to generalize because of the label sparseness problem. In this paper, we propose a robust training method for MRC models to address this problem. Our method consists of three strategies: 1) label smoothing, 2) word overlapping, 3) distribution prediction.

machine learning - What are soft classes? - Cross Validated You can't do that with hard classes, other than by creating two training instances with two different labels: x -> [1, 0, 0, 0, 0] and x -> [0, 0, 1, 0, 0]. As a result, the weights will probably bounce back and forth, because the two examples push them in different directions. That's when soft classes can be helpful.
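As a rough illustration of the point above, the two conflicting hard-label instances can be replaced by one instance whose soft label is their average. Because cross entropy is linear in the target, the averaged loss over the two hard labels equals the loss against the single soft label. The predicted distribution below is made up for the example.

```python
import math


def cross_entropy(target, predicted):
    """-sum_k target_k * log(predicted_k), skipping zero-weight classes."""
    return -sum(t * math.log(p) for t, p in zip(target, predicted) if t > 0)


predicted = [0.4, 0.1, 0.3, 0.1, 0.1]   # illustrative model output for x

label_a = [1, 0, 0, 0, 0]               # first hard label for x
label_b = [0, 0, 1, 0, 0]               # second, conflicting hard label for x

# One soft class vector carrying both opinions at once.
soft_label = [(a + b) / 2 for a, b in zip(label_a, label_b)]

avg_hard_loss = (cross_entropy(label_a, predicted)
                 + cross_entropy(label_b, predicted)) / 2
soft_loss = cross_entropy(soft_label, predicted)

print(soft_label, avg_hard_loss, soft_loss)
```

The difference in practice is that the soft label gives every update a single consistent target, rather than two separate updates that pull in different directions.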

What is the difference between soft and hard labels? Hard label = binary encoded, e.g. [0, 0, 1, 0]. Soft label = probability encoded, e.g. [0.1, 0.2, 0.5, 0.2]. Soft labels have the potential to tell a model more about the meaning of each sample.

Labeling images and text documents - Azure Machine Learning Select the image that you want to label and then select the tag. The tag is applied to all the selected images, and then the images are deselected. To apply more tags, you must reselect the images. The following animation shows multi-label tagging: Select all is used to apply the "Ocean" tag.

![Reflections Of The Void: [Links of the Day] 22/10/2019 : Machine learning platform for medical ...](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEhkTAw0QIVLYJ4KMbRhuogl09QhttIxXWAvr3QNqx7B0AGm_wufW52ZaNZdoxhtNaGVYpg6VT57uNgtsFU6j4MYbOggbMaDJ0_4NZKB_Ibrnrcy90WaPDyMhJf6XM3vIS5Z44CB-jEc526L/s1600/maintenance-page_01.gif)

Reflections Of The Void: [Links of the Day] 22/10/2019 : Machine learning platform for medical ...

Label Smoothing - Lei Mao's Log Book In machine learning or deep learning, we usually use a lot of regularization techniques, such as L1, L2, dropout, etc., to prevent our model from overfitting. ... Label smoothing is a regularization technique for classification problems to prevent the model from predicting the labels too confidently during training and generalizing poorly.

An introduction to MultiLabel classification - GeeksforGeeks To use those we are going to use the metrics module from sklearn, which takes the predictions made by the model on the test data and compares them with the true labels. Code: `predicted = mlknn_classifier.predict(X_test_tfidf)`, `print(accuracy_score(y_test, predicted))`, `print(hamming_loss(y_test, predicted))`
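To make the two metrics in the snippet above concrete, here is a hand-rolled sketch of what they measure on multi-label data. These functions are stand-ins written for illustration, not the sklearn implementations; for multi-label input, sklearn's `accuracy_score` reports subset accuracy (every label of a sample must match). The label matrices are made up.

```python
def hamming_loss_manual(y_true, y_pred):
    """Fraction of individual label slots that are predicted wrongly."""
    total = sum(len(row) for row in y_true)
    wrong = sum(
        t != p
        for true_row, pred_row in zip(y_true, y_pred)
        for t, p in zip(true_row, pred_row)
    )
    return wrong / total


def subset_accuracy_manual(y_true, y_pred):
    """Fraction of samples whose whole label set is predicted exactly."""
    exact = sum(true_row == pred_row for true_row, pred_row in zip(y_true, y_pred))
    return exact / len(y_true)


# Three samples, three possible labels each (illustrative values).
y_test = [[1, 0, 1], [0, 1, 0], [1, 1, 0]]
predicted = [[1, 0, 0], [0, 1, 0], [1, 0, 0]]

loss = hamming_loss_manual(y_test, predicted)
accuracy = subset_accuracy_manual(y_test, predicted)
print(loss, accuracy)
```

Hamming loss gives partial credit per label, while subset accuracy is all-or-nothing per sample, which is why the two numbers can look very different on the same predictions.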

Features and labels - Module 4: Building and evaluating ML ... - Coursera This module explores the various considerations and requirements for building a complete dataset in preparation for training, evaluating, and deploying an ML model. It also includes two demos—Vision API and AutoML Vision—as relevant tools that you can easily access yourself or in partnership with a data scientist.

PDF Learning Soft Labels via Meta Learning - arXiv One can generate soft labels using distillation [5], but the labels obtained at convergence might not be optimal for all stages of training. Similarly, in settings where training data may contain wrong annotations, the learning process should ideally correct them, without relying on human intervention.
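One common way distillation yields soft labels is to pass a teacher model's logits through a temperature-scaled softmax. The sketch below assumes that is the kind of distillation the snippet refers to; the logits and the temperature value are hypothetical.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Softmax over logits / temperature; higher temperature gives softer labels."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


teacher_logits = [5.0, 2.0, 0.5]        # hypothetical teacher output

sharp = softmax_with_temperature(teacher_logits, temperature=1.0)
soft = softmax_with_temperature(teacher_logits, temperature=4.0)

# Both are valid distributions; the high-temperature one spreads more
# probability mass onto the non-argmax classes.
print(sharp)
print(soft)
```

Labels produced this way are fixed once the teacher is trained, which matches the snippet's concern that they might not be optimal for every stage of the student's training.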

What are labels in machine learning? - Quora Label smoothing is a simple yet effective regularization tool operating on the labels. Label smoothing is a form of output distribution regularization that prevents overfitting of a neural network by softening the ground-truth labels in the training data in an attempt to penalize overconfident outputs.

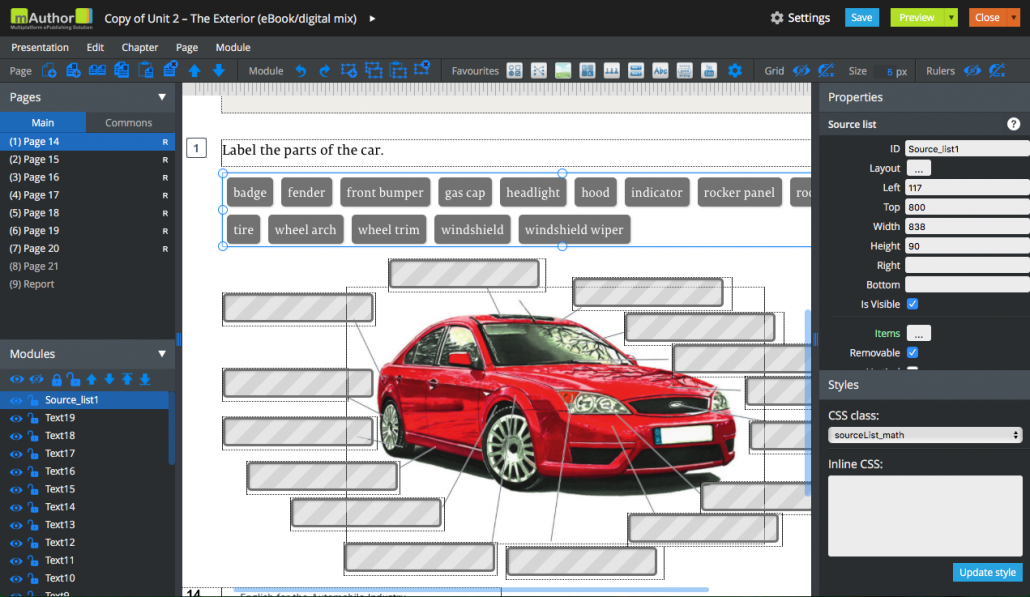

Data Labeling Software: Best Tools for Data Labeling - neptune.ai In machine learning and AI development, the aspects of data labeling are essential. You need a structured set of training data that an ML system can learn from. It takes a lot of effort to create accurately labeled datasets. Data labeling tools come very much in handy because they can automate the labeling process, which […]

